After a week of criticism over its planned new system for detecting images of child sex abuse, Apple Inc. has said it will hunt only for pictures that have been flagged by clearinghouses in multiple countries.

That shift and others intended to reassure privacy advocates were detailed to reporters late last week in an unprecedented fourth background briefing since the initial announcement eight days prior of a plan to monitor customer devices, Reuters reported.

Having previously declined to say how many matched images on a phone or computer would trigger a notification to Apple for human review and possible reporting to authorities, executives said on Friday that the threshold would start at 30, though it could be lowered over time as the system improves.

Apple also said it would be easy for researchers to make sure that the list of image identifiers being sought on one iPhone was the same as the lists on all other phones, seeking to blunt concerns that the new mechanism could be used to target individuals. The company published a long paper explaining how it had reasoned through potential attacks on the system and defended against them.

Apple acknowledged that it had handled communications around the program poorly, triggering backlash from influential technology policy groups and even its own employees concerned that the company was jeopardizing its reputation for protecting consumer privacy.

It declined to say whether that criticism had changed any of the policies or software, but said that the project was still in development and changes were to be expected.

Asked why it had only announced that the U.S.-based National Center for Missing and Exploited Children would be a supplier of flagged image identifiers when at least one other clearinghouse would need to have separately flagged the same picture, an Apple executive said that the company had only finalized its deal with NCMEC.

SRK, Aamir, Big B, Ted Sarandos, WPP CEO, MPA chief, other stars, to headline WAVES

SRK, Aamir, Big B, Ted Sarandos, WPP CEO, MPA chief, other stars, to headline WAVES  TIPS Music ends FY25 on high note with 29% revenue growth

TIPS Music ends FY25 on high note with 29% revenue growth  WAVES’ Bharat Pavillion to showcase Indian media’s evolution,culture

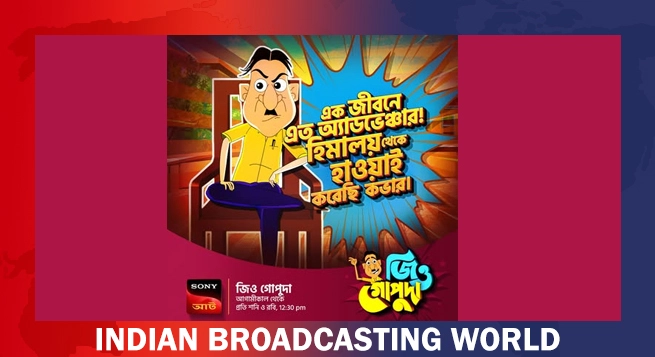

WAVES’ Bharat Pavillion to showcase Indian media’s evolution,culture  Sony AATH to launch ‘Jiyo Gopuda’ on April 26

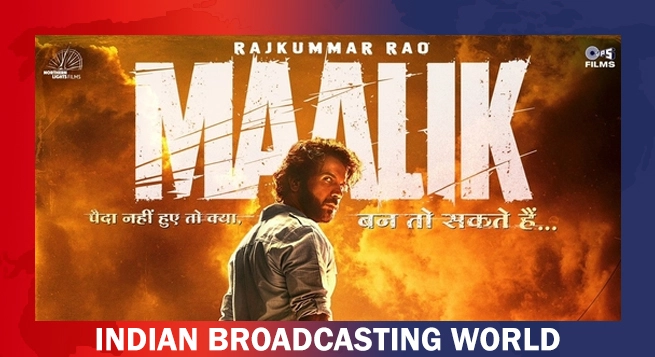

Sony AATH to launch ‘Jiyo Gopuda’ on April 26  Rajkummar Rao’s ‘Maalik’ gets new theatrical release date

Rajkummar Rao’s ‘Maalik’ gets new theatrical release date  Kriti Sanon joins Dreame as brand’s first-ever ambassador in India

Kriti Sanon joins Dreame as brand’s first-ever ambassador in India  YO YO Honey Singh, Stage Aaj Tak wrap up ‘Millionaire Tour’

YO YO Honey Singh, Stage Aaj Tak wrap up ‘Millionaire Tour’  Prime Video announces global premiere for ‘Crazxy’

Prime Video announces global premiere for ‘Crazxy’