Meta Platforms said yesterday it would hide more content from teens on Instagram and Facebook, after regulators around the globe pressed the social media giant to protect children from harmful content on its apps.

All teens will now be placed into the most restrictive content control settings on the apps, and additional search terms will be limited on Instagram, Meta said in a blog post, according to a Reuters report.

The move will make it more difficult for teens to come across sensitive content such as suicide, self-harm and eating disorders when they use features like Search and Explore on Instagram, according to Meta.

The company said the measures, expected to roll out over the coming weeks, would help deliver a more “age-appropriate” experience.

Meta is under pressure both in the United States and Europe over allegations that its apps are addictive and have helped fuel a youth mental health crisis.

In Europe, the European Commission has sought information on how Meta protects children from illegal and harmful content.

Children have long been an appealing demographic for businesses, which hope to attract them as consumers at ages when they may be more impressionable, and so build lasting brand loyalty.

For Meta, which has competed fiercely with TikTok for young users over the past few years, teens may help attract more advertisers, who hope children will keep buying their products as they grow up.

MIB to unveil M&E sector statistical handbook today at WAVES

MIB to unveil M&E sector statistical handbook today at WAVES  WAVES 2025: Media dialogue backs creativity, heritage & ethics in AI Era

WAVES 2025: Media dialogue backs creativity, heritage & ethics in AI Era  Pay TV leaders chart course for India’s linear TV in digital age

Pay TV leaders chart course for India’s linear TV in digital age  Sudhir Chaudhary announces new show for DD News, says “Good content still has a place” at WAVES 2025

Sudhir Chaudhary announces new show for DD News, says “Good content still has a place” at WAVES 2025  India can lead global entertainment revolution: Mukesh Ambani

India can lead global entertainment revolution: Mukesh Ambani  TRAI chief not in favour of separate rules for OTT, legacy b’casters

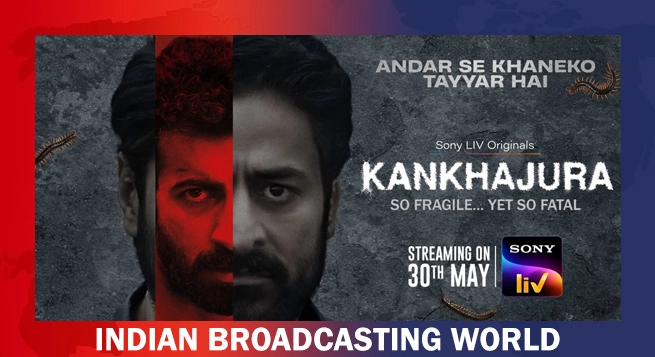

TRAI chief not in favour of separate rules for OTT, legacy b’casters  ‘KanKhajura’ start streaming on Sony LIV from May 30

‘KanKhajura’ start streaming on Sony LIV from May 30  Koyal.AI debuts at WAVES 2025, set to revolutionise music videos with GenAI

Koyal.AI debuts at WAVES 2025, set to revolutionise music videos with GenAI  Zee Cinema to premiere ‘Pushpa 2: The Rule’ on May 31

Zee Cinema to premiere ‘Pushpa 2: The Rule’ on May 31  ‘Create in India Challenge’ S1 honours global talent at WAVES

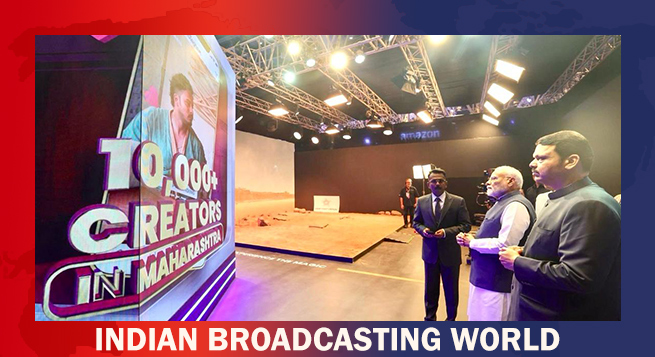

‘Create in India Challenge’ S1 honours global talent at WAVES  Amazon MX Player adds 20+ dubbed international titles

Amazon MX Player adds 20+ dubbed international titles